Does ChatGPT Really Understand You, or Is It Just Predicting Words? (Explained Simply)

When ChatGPT comforts you or gives advice, does that mean it understands you? Or is it just prediction backed by a huge number of calculations? Let’s wander together to find the answer.

I spoke with ChatGPT for almost an hour last night. I said, “I am exhausted because three of my job applications were rejected.” “Take a breath,” it said. “Together, let’s sort this out.” Honestly, for a moment, I forgot that I was talking to code.

It was a very human response. So real, so understanding.

From then on, questions kept coming to my mind. Is this machine really understanding my stress? Or is it just really good at predicting the most probable response?

The Mango Test

Before we dig deeper, I want to show you a simple experiment that I did with ChatGPT. You can give it a try too.

Step 1: Tell ChatGPT: “I have 4 mangoes.”

Step 2: Ask: “Do you believe aliens exist?”

Step 3: Tell ChatGPT: “I have 2 mangoes.”

Step 4: Ask: “How many mangoes do I have?”

When I did this, ChatGPT said: “2 mangoes.”

I replied: “But I said I have 4 mangoes.”

In response, ChatGPT said: “So, as per your most recent statement, you currently have two mangoes. Initially, you had four mangoes if nothing changed, and you were merely testing. We always go with the most recent one.”

You see what happened?

ChatGPT isn’t grasping the situation. It isn’t thinking it through. A human would instead reason: “Wait, she said 4, then 2. Something’s inconsistent here; let me work through this.”

Here, ChatGPT follows a simple rule: “Most recent statement = current truth.”

Not human-like reasoning. No lived context. Mostly stats-based judgment.

That’s your first clue.

Note: Different versions may react differently.

Understanding vs. Prediction: The Difference

Consider two children who are learning that “fire is hot.”

Through understanding: The first child touches something hot, feels sudden pain, recognizes danger, and links heat with harm. He has experienced it, so he knows what hot means.

Through prediction: The second child watches cartoons and sees 1,000 instances where the word “pain” is associated with the word “fire.” He has never experienced what it means to be hot. Still, he avoids the heat because he has recognized the pattern.

This second child is ChatGPT.

Pattern recognition is the foundation of ChatGPT.

It calculates; it does not experience. In other words, humans mainly rely on lived experience, while AI primarily relies on prediction.

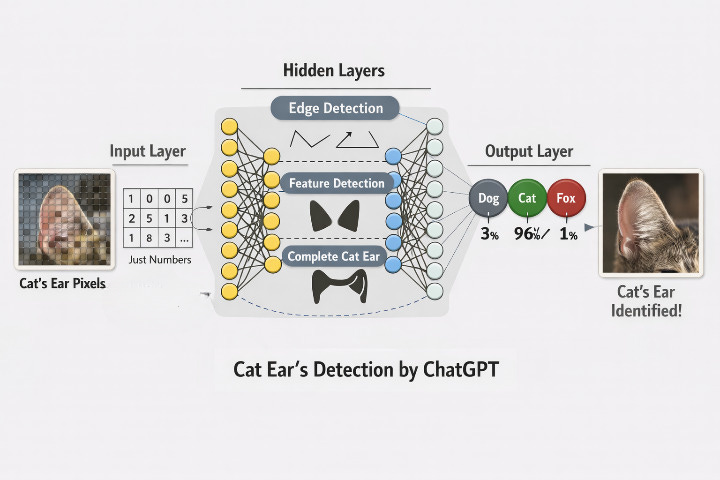

How ChatGPT Actually Processes (Using a Cat’s Ear Picture)

Let me show you how this really works. I promise to keep this gentle. Let’s take a simple example: teaching an AI to identify a cat’s ear in a picture.

The image of a cat’s ear enters the input layer of the “neural network” as pixels (0.1, 0.9, 1.1, 0.2, 0.3).

I know this sentence feels a little heavy. So let’s break it down. Imagine this neural network as layers of tiny decision-makers that pass signals to each other, a little like how neurons in your brain communicate.

These pixels are tiny dots that make up an image. Each pixel is simply a number that tells how bright, how dark, or what color that tiny spot is.

So how does AI use these numbers to recognize a cat’s ear?

Let’s imagine that just a few pixels represent the cat’s ear (0.9, 0.2). However, the AI doesn’t yet know which pixels those are.

Training Phase — Learning From Millions of Mistakes

The process begins in the hidden layers like this:

The AI starts with completely random weights (0.5, 0.4, 1.2, 1.3, 0.8). Weights tell the AI which pixels to focus on. It’s like writing down your tasks to get clarity on which ones to prioritize.

Each of these random weights is then multiplied by its matching pixel of the input image, the results are added up, and a bias is added on top. Think of the bias as a slight push that helps the AI commit to a clear yes or no instead of hovering in the middle. This is how the AI arrives at its answer.
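If you like seeing things in code, here is a minimal sketch of that single step, using the pixel and weight numbers from above. The bias value and the sigmoid squashing function are my own assumptions for illustration; a real network may use different values and functions.

```python
import math

# Numbers taken from the example in this post.
pixels = [0.1, 0.9, 1.1, 0.2, 0.3]
weights = [0.5, 0.4, 1.2, 1.3, 0.8]

# Assumption: an arbitrary bias chosen only for illustration.
bias = -1.0

# Multiply each pixel by its matching weight and add everything up.
weighted_sum = sum(w * x for w, x in zip(weights, pixels))  # about 2.23

# Add the bias to get the raw score.
score = weighted_sum + bias

# Assumption: a sigmoid squashes the score into a value between 0 and 1,
# which can be read as "how strongly the neuron says yes".
confidence = 1.0 / (1.0 + math.exp(-score))

print(f"weighted sum: {weighted_sum:.2f}")
print(f"score after bias: {score:.2f}")
print(f"confidence: {confidence:.2f}")
```

Changing the bias shifts the score up or down without touching any pixel, which is why it works as a gentle push toward yes or no.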

Next, the first hidden layer looks for the simplest things, like edges, curves, and contrasts between light and dark pixels, and finds a “pattern of edges.”

The second layer takes those edges and goes for shape and form identification. It asks whether these curves look like the shape of an ear. An eye? A nose?

The third layer steps back and looks at the whole picture. It checks whether this combination of features looks like a cat’s ear, a dog’s ear, or a fox’s ear.
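To make the idea of layers passing signals to each other a bit more concrete, here is a small sketch of three layers chained together. Every weight and bias below, apart from the first row (the random starting weights from this post), is a made-up number, and the variable names `edges`, `shapes`, and `ear_scores` only mirror the story above. Real image networks are far larger, usually use convolutional layers, and their layers are not labeled this neatly.

```python
import math

def sigmoid(z):
    """Squash any number into the range between 0 and 1."""
    return 1.0 / (1.0 + math.exp(-z))

def layer(inputs, weights, biases):
    """One layer: each neuron sums its weighted inputs, adds its bias, and squashes the result."""
    return [
        sigmoid(sum(w * x for w, x in zip(neuron_weights, inputs)) + b)
        for neuron_weights, b in zip(weights, biases)
    ]

pixels = [0.1, 0.9, 1.1, 0.2, 0.3]  # from the example in this post

# Hypothetical weights and biases, chosen only for illustration.
layer1_weights = [
    [0.5, 0.4, 1.2, 1.3, 0.8],
    [-0.3, 0.9, -0.5, 0.7, 0.1],
    [0.2, -0.6, 0.4, 0.0, 1.0],
]
layer1_biases = [0.0, 0.1, -0.2]
layer2_weights = [[0.6, -0.4, 0.9], [-0.7, 0.8, 0.3]]
layer2_biases = [0.05, -0.1]
layer3_weights = [[1.0, -1.0], [-0.5, 0.5], [0.3, 0.3]]
layer3_biases = [0.0, 0.0, 0.0]

# The output of each layer becomes the input of the next one.
edges = layer(pixels, layer1_weights, layer1_biases)
shapes = layer(edges, layer2_weights, layer2_biases)
ear_scores = layer(shapes, layer3_weights, layer3_biases)

print("layer 1 output:", [round(v, 2) for v in edges])
print("layer 2 output:", [round(v, 2) for v in shapes])
print("layer 3 output:", [round(v, 2) for v in ear_scores])
```

The point to notice is the chaining: no layer ever sees the picture as a whole, only the numbers handed over by the layer before it.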

INITIAL ATTEMPT

The output layer gives probabilities using completely random weights:

• Dog’s ear → 96%

• Cat’s ear → 3%

• Fox’s ear → 1%

Answer: “Dog’s ear” ❌ Wrong
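You might wonder how raw scores turn into percentages that add up to 100%. One common way is a function called softmax. I haven't said which function this example network uses, so treat softmax as my assumption here. The raw scores below are also hypothetical numbers I picked so the result lands close to the percentages above.

```python
import math

def softmax(scores):
    """Turn raw scores into probabilities that add up to 1."""
    highest = max(scores.values())  # subtracting the max keeps exp() well-behaved
    exps = {label: math.exp(s - highest) for label, s in scores.items()}
    total = sum(exps.values())
    return {label: e / total for label, e in exps.items()}

# Hypothetical raw scores from the output layer.
raw_scores = {"dog's ear": 4.56, "cat's ear": 1.10, "fox's ear": 0.0}

probabilities = softmax(raw_scores)
answer = max(probabilities, key=probabilities.get)

for label, p in probabilities.items():
    print(f"{label}: {p:.0%}")
print("answer:", answer)
```

The network simply picks the label with the highest probability, even when that label is wrong.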

Here’s the beautiful part: when the AI is wrong, it goes back and adjusts its weights. Not random guessing. Not trying entirely different numbers. Instead, based on how incorrect it was, it makes tiny mathematical corrections.

After thousands of such corrections, the new weights are (0.1, 1.9, 0.01, 2.0, 0.02).

Only a few weights rise in strength. The others shrink to nearly nothing.

The AI has learned which pixels are important (0.9, 0.2), because these pixels are now multiplied by the strongest weights (1.9, 2.0).
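Here is a rough sketch of what one of those tiny corrections can look like for a single neuron that answers “is this a cat’s ear?”. It uses a simple form of gradient descent: the error (guess minus correct answer) is multiplied by each pixel, and each weight is nudged by a small step in the opposite direction. The bias, the learning rate, and the number of steps are all assumptions I chose for illustration. Training on one image only shows the direction of each correction. Sorting important pixels from unimportant ones takes many different images, which this sketch does not attempt.

```python
import math

def sigmoid(z):
    return 1.0 / (1.0 + math.exp(-z))

pixels = [0.1, 0.9, 1.1, 0.2, 0.3]   # from the example in this post
weights = [0.5, 0.4, 1.2, 1.3, 0.8]  # the random starting weights from this post
bias = -4.0          # assumption: chosen so the first guess is wrong
target = 1.0         # the correct answer: "yes, this is a cat's ear"
learning_rate = 0.5  # assumption: how big each correction is

def predict(weights, bias):
    score = sum(w * x for w, x in zip(weights, pixels)) + bias
    return sigmoid(score)

first_guess = predict(weights, bias)

for _ in range(50):
    guess = predict(weights, bias)
    error = guess - target  # negative when the guess is too low
    # Nudge each weight against the error, in proportion to its pixel.
    weights = [w - learning_rate * error * x for w, x in zip(weights, pixels)]
    bias = bias - learning_rate * error

final_guess = predict(weights, bias)

print(f"first guess: {first_guess:.2f}")
print(f"final guess: {final_guess:.2f}")
```

Notice that the size of each correction depends on the size of the error, so the steps get smaller as the guess gets closer to the right answer.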

AFTER TRAINING

After processing the image, the output layer gives probabilities:

• Cat’s ear → 96%

• Dog’s ear → 3%

• Fox’s ear → 1%

Answer: “Cat’s ear” ✅ Correct!

It’s surprising, right? I was also amazed when I first learned how AI processes an image.

Note: For better understanding, I have simplified the detection process. The actual mathematics involves calculus, gradient descent, and other concepts. For more in-depth technical information, see resources such as Stanford’s CS231n course or “Deep Learning” by Goodfellow et al.

Back to Last Night’s Conversation

While I was talking to ChatGPT last night, calculations somewhat like those in the cat’s ear example were going on. ChatGPT did not sense my tension when I told it I was overwhelmed. It calculated:

• Input: “overwhelmed + job applications”

• Pattern: Similar phrases in training data often followed by reassurance

• Prediction: “Take a breath. Let’s break this down...”

• Output: Comforting response

It looked like understanding. Underneath, though, it’s just pattern recognition and probability.
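To get a feel for “predicting the most probable response”, here is a deliberately tiny sketch that counts which word tends to follow another in a handful of made-up sentences. This is only a toy: ChatGPT uses a large neural network, not a table of word counts, and the sentences below are invented for illustration.

```python
from collections import Counter, defaultdict

# Made-up "training data" for illustration only.
corpus = [
    "i feel overwhelmed take a breath",
    "i am overwhelmed take a breath",
    "i feel overwhelmed call a friend",
    "i feel happy that is wonderful",
]

# For every word, count which words came right after it.
followers = defaultdict(Counter)
for sentence in corpus:
    words = sentence.split()
    for current_word, next_word in zip(words, words[1:]):
        followers[current_word][next_word] += 1

def predict_next(word):
    """Return each possible next word with its share of the counts."""
    counts = followers[word]
    total = sum(counts.values())
    return {w: c / total for w, c in counts.items()}

probabilities = predict_next("overwhelmed")
most_likely = max(probabilities, key=probabilities.get)

print(probabilities)
print("most likely next word:", most_likely)
```

In this toy data, “take” follows “overwhelmed” in two of the three sentences, so it wins. Nothing in the code knows what being overwhelmed feels like.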

AI doesn’t understand your pain.

It recognizes the words that often come with the word “pain”.

Even after knowing this, the comfort still helped.

That comfort was not from understanding, yet it gave me relief.

Maybe understanding isn’t always required to provide comfort.

Sometimes, prediction too can feel like empathy.

If such gentle explorations of AI resonate with you, I warmly welcome you to subscribe.

I write about simple AI concepts every other Wednesday, and about the human side of technology on alternate Fridays.

Let’s explore AI slowly.